Why do we need a virtual machine? Most people use Windows, but running Spark and Python on Windows is not very convenient, so in such cases we can create a Linux virtual machine. Desktop virtualization software such as VMware lets you install and run multiple operating systems on your desktop.

Installing Spark on Ubuntu

Posted 2017-07-04 in Linux, Spark by Andrew B.

I’m busy experimenting with Spark. This is what I did to set up a local cluster on my Ubuntu machine. Before you embark on this, you should first set up Hadoop. Then download the latest release of Spark and unpack the archive.

Apache Spark can run on most operating systems. In this tutorial, we look at the process of installing Apache Spark on Ubuntu 16, a popular desktop flavor of Linux.

Apache Spark is a data analytics tool that can process data from HDFS, S3, or other data sources in memory. In this post, we will install Apache Spark on an Ubuntu 17.10 machine.

For this guide, we will use Ubuntu version 17.10 (GNU/Linux 4.13.0-38-generic x86_64).

Apache Spark is part of the Hadoop ecosystem for Big Data. Try installing Apache Hadoop and building a sample application with it.

Updating existing packages

To start the Spark installation, we first update the machine with the latest available software packages:

sudo apt-get update && sudo apt-get -y upgrade

As Spark runs on the Java Virtual Machine, we need to install Java on our machine. Spark 2.3.0 needs Java 8 or later (support for Java 7 was removed in Spark 2.2.0). Here, we will use Java 8:

sudo apt-get -y install openjdk-8-jdk


Downloading Spark files

All the necessary packages now exist on our machine. We’re ready to download the required Spark TAR files so that we can start setting them up and run a sample program with Spark as well.

In this guide, we will install Spark v2.3.0, available from the Spark download page.

Download the corresponding files with this command:

wget http://www-us.apache.org/dist/spark/spark-2.3.0/spark-2.3.0-bin-hadoop2.7.tgz

Depending on your network speed, this can take up to a few minutes because the file is large.

Now that the TAR file is downloaded, extract it in the current directory:

tar xvzf spark-2.3.0-bin-hadoop2.7.tgz

Extraction takes a few seconds because the archive is large.

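Upgrading Apache Spark in the future can cause problems, because every path that points at a specific version directory must be updated. You can avoid these issues by creating a softlink to the Spark directory and pointing your paths at the link instead. Run this command to create the softlink:

ln -s /root/LinuxHint/spark-2.3.0-bin-hadoop2.7 /root/LinuxHint/spark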

Adding Spark to Path

To run Spark scripts, we will now add Spark to the PATH. To do this, open the ~/.bashrc file in a text editor.

Add these lines to the end of the .bashrc file so that the PATH includes the directory containing the Spark executables:

export SPARK_HOME=/root/LinuxHint/spark

export PATH=$SPARK_HOME/bin:$PATH


To activate these changes, reload the .bashrc file:

source ~/.bashrc

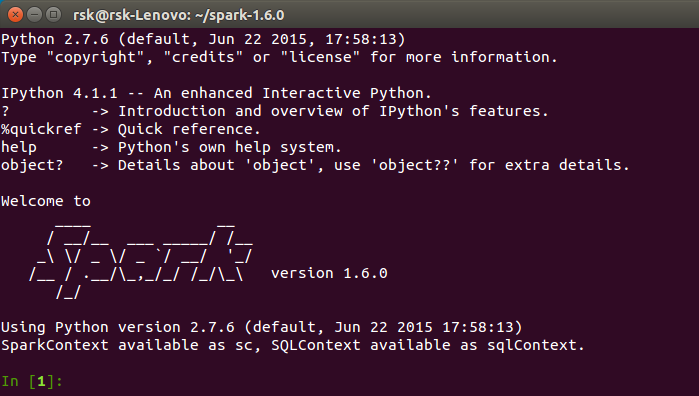

Launching Spark Shell

Now, from just outside the spark directory, run the following command to open the Spark shell:

spark-shell

The Spark shell is now open.

The console output shows that Spark has also started a web UI on port 4040 (http://localhost:4040 when you run the shell locally). Let’s visit it.

Although we will work in the console itself, the web UI is an important place to look when you run heavy Spark jobs, because it shows what is happening in each job you execute.

Check the Spark shell version with a simple command:

spark-shell --version

This prints a banner that includes the Spark version (2.3.0 here).

Making a sample Spark Application with Scala

Now, we will build a sample word-counter application with Apache Spark. First, load a text file through the SparkContext (sc) in the Spark shell:

scala> val Data = sc.textFile("/root/LinuxHint/spark/README.md")

Data: org.apache.spark.rdd.RDD[String] = /root/LinuxHint/spark/README.md MapPartitionsRDD[1] at textFile at <console>:24

scala>

Next, split the text in the file into tokens (words) that Spark can process:

scala> val tokens = Data.flatMap(s => s.split(' '))

tokens: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[2] at flatMap at <console>:25

scala>

Now, initialise the count for each word to 1:

scala> val tokens_1 = tokens.map(s => (s, 1))

tokens_1: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[3] at map at <console>:25

scala>
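To see what the two transformations above produce, here is a minimal sketch that runs the same steps on plain Scala collections instead of an RDD, so it needs no Spark installation. The object `TokenizeSketch`, its helper methods, and the sample lines are hypothetical and only mirror the shell commands.

```scala
// Local sketch of the flatMap and map steps above, using plain Scala
// collections in place of an RDD (no Spark needed). Names are hypothetical.
object TokenizeSketch {
  // Like Data.flatMap(s => s.split(' ')): split every line on single spaces
  // and flatten the per-line arrays into one sequence of tokens.
  def tokenize(lines: Seq[String]): Seq[String] =
    lines.flatMap(line => line.split(' '))

  // Like tokens.map(s => (s, 1)): pair every token with an initial count of 1.
  def pairWithOne(tokens: Seq[String]): Seq[(String, Int)] =
    tokens.map(token => (token, 1))

  def main(args: Array[String]): Unit = {
    // Hypothetical input; the second line contains two consecutive spaces.
    val lines = Seq("Apache Spark", "Spark  shell")

    // With map instead of flatMap we would get one array per line.
    val perLine: Seq[Array[String]] = lines.map(line => line.split(' '))
    println(perLine.map(_.mkString("[", ",", "]"))) // List([Apache,Spark], [Spark,,shell])

    val tokens = tokenize(lines)
    println(tokens) // List(Apache, Spark, Spark, , shell)
    println(pairWithOne(tokens))
  }
}
```

Note the empty string in the token list: splitting on a single space yields an empty token wherever two spaces occur in a row. If you do not want such tokens counted, one option is to add a `filter(_.nonEmpty)` step after `flatMap`, both here and on the RDD.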

Finally, count how often each word occurs in the file:

scala> val sum_each = tokens_1.reduceByKey((a, b) => a + b)
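`reduceByKey` merges the values of each key using the function you pass in. The Spark API documentation asks for a function that is associative and commutative, because Spark also merges values locally within each partition before combining the partial results; integer addition satisfies both. The sketch below reproduces only the result, not Spark's distributed execution, on a plain Scala `Seq` of pairs; `ReduceByKeySketch` and its `reduceByKey` helper are hypothetical names.

```scala
// Local sketch of the result of reduceByKey on a pair RDD, computed over a
// plain Scala Seq. It does not model partitions or shuffles. Names are hypothetical.
object ReduceByKeySketch {
  // Merge the values of each key with `combine`; the first value seen for a
  // key is stored as-is and later values are folded into it.
  def reduceByKey[K, V](pairs: Seq[(K, V)])(combine: (V, V) => V): Map[K, V] =
    pairs.foldLeft(Map.empty[K, V]) { case (acc, (key, value)) =>
      val merged = acc.get(key) match {
        case Some(previous) => combine(previous, value)
        case None           => value
      }
      acc.updated(key, merged)
    }

  def main(args: Array[String]): Unit = {
    // Hypothetical (word, 1) pairs, as produced by tokens.map(s => (s, 1)).
    val pairs = Seq(("Spark", 1), ("shell", 1), ("Spark", 1))
    println(reduceByKey(pairs)((a, b) => a + b)) // Map(Spark -> 2, shell -> 1)
  }
}
```

The second parameter list lets Scala infer `K` and `V` from the pairs first, so the combining function can be written without type annotations, as in the shell command.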

Time to look at the program’s output. Collect the words and their respective counts:

scala> sum_each.collect()

res1: Array[(String, Int)] = Array((package,1), (For,3), (Programs,1), (processing.,1), (Because,1), (The,1), (page](http://spark.apache.org/documentation.html).,1), (cluster.,1), (its,1), ([run,1), (than,1), (APIs,1), (have,1), (Try,1), (computation,1), (through,1), (several,1), (This,2), (graph,1), (Hive,2), (storage,1), (['Specifying,1), (To,2), ('yarn',1), (Once,1), (['Useful,1), (prefer,1), (SparkPi,2), (engine,1), (version,1), (file,1), (documentation,1), (processing,1), (the,24), (are,1), (systems.,1), (params,1), (not,1), (different,1), (refer,2), (Interactive,2), (R,1), (given.,1), (if,4), (build,4), (when,1), (be,2), (Tests,1), (Apache,1), (thread,1), (programs,1), (including,4), (./bin/run-example,2), (Spark.,1), (package.,1), (1000).count(),1), (Versions,1), (HDFS,1), (D.

scala>

Excellent! We were able to run a simple Word Counter example using Scala programming language with a text file already present in the system.
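`collect()` returns the (word, count) pairs without sorting them by count, which makes the most frequent words hard to spot. On the RDD itself, one option is to sort before collecting, for example `sum_each.sortBy(_._2, ascending = false).take(10)`. The sketch below shows the same ordering logic on a local array like the one `collect()` returns; `TopWordsSketch` and `topN` are hypothetical names, and the sample pairs are copied from the output above.

```scala
// Local sketch: pick the n most frequent words from (word, count) pairs such
// as the array returned by sum_each.collect(). Names are hypothetical.
object TopWordsSketch {
  // Sort by descending count, breaking ties alphabetically so the result is
  // deterministic, then keep the first n pairs.
  def topN(counts: Array[(String, Int)], n: Int): Seq[(String, Int)] =
    counts.toSeq
      .sortBy { case (word, count) => (-count, word) }
      .take(n)

  def main(args: Array[String]): Unit = {
    // A few pairs copied from the collect() output above.
    val counts = Array(("the", 24), ("if", 4), ("build", 4), ("For", 3), ("Apache", 1))
    topN(counts, 3).foreach { case (word, count) => println(s"$word\t$count") }
  }
}
```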

Conclusion

In this lesson, we looked at how to install and start using Apache Spark on an Ubuntu 17.10 machine and ran a sample application on it as well.


How to Install Apache Spark on Ubuntu 16.04 / Debian 8 / Linux Mint 17

Apache Spark is a fast, flexible, open source distributed engine for large-scale data processing. It is a project of the Apache Software Foundation and was originally developed at UC Berkeley’s AMPLab. Spark can run in its own standalone cluster mode, on Hadoop YARN or Apache Mesos, and on cloud platforms such as Amazon EC2, and it can read data from HDFS, HBase, Cassandra, Hive, and other sources.


Step-1 (Add the Java PPA)

Step-2 (Install Java)

Update the apt-get package index

Install the Java installer

Step-3 (Install Scala)

Create the Scala directory

Download the Scala packages

Install the Scala packages

Step-4 (Install Apache Spark)

Download the Apache Spark tarball

Extract the Apache Spark tarball

Copy the extracted files to /opt/spark

Change to the Spark directory to access the Spark shell

Finally, run the Spark shell (also known as the Scala shell) from Bash

Final Words

That’s all: you have now installed Apache Spark on your Debian-based Linux distribution. If you have any issues with the guide above, let us know in the comment section below.